Relying on APIs as the only bridge between your multi-agent system and its data sources leaves ample room to save time and money and to strengthen governance. Among the available alternatives, the Model Context Protocol (MCP) is the best solution.

Orchestrating multiple agents to interact in a standardized way with external tools and platforms is the foundation of MCP. At Crazy Imagine Software, we work on it to accelerate hundreds of projects. Discover its impact on your business.

The critical problem of Large Language Models (LLMs)

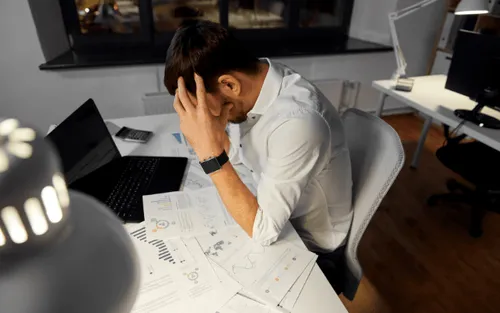

LLMs stand out when they work in isolation: they understand, reason, and generate responses with a quality that already competes with human experts in many specific tasks.

The trouble starts when you try to fit them into your real architecture. By default, they are disconnected from the state of your systems, your databases, and your business workflows.

This translates into three direct points of friction for you as a CTO:

- LLMs respond with outdated or incomplete information because they cannot see your systems of record or your real-time events.

- They cannot execute actions on their own (create tickets, move money, update a CRM) without an additional layer of specific integration.

- Scaling from a chatbot to a multi-agent system that interacts with dozens of tools means writing and maintaining a jungle of ad hoc integrations that are difficult to version and govern.

In practice, this turns many AI projects into islands: promising pilots that never reach production or that remain limited to superficial automations.

MCP: a secure bridge between LLMs and the world

The Model Context Protocol is a standard that defines how an AI agent discovers, understands, and uses external tools and data sources, without coupling to specific implementations.

Instead of connecting each agent to each API in a handcrafted way, MCP establishes a common language between models, clients, and servers that simplifies multi-agent orchestration.

Three key principles explain how MCP works and why it serves as a common language that streamlines your workflows.

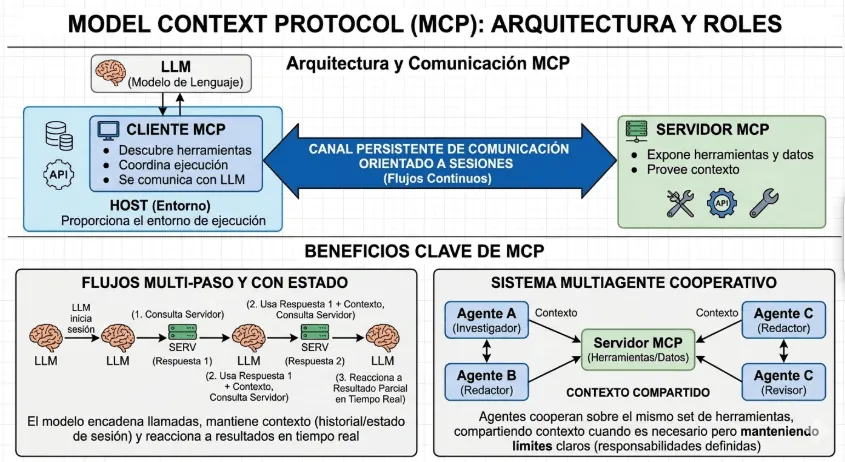

Client-server architecture

MCP is organized around three roles (clients, servers, and hosts) connected through a persistent, session-oriented communication channel rather than simple isolated calls.

In this scheme, the MCP server exposes tools and data; the MCP client discovers them, coordinates their execution, and communicates with the LLM; the host provides the environment where these clients live.

This client-server architecture enables multi-step and stateful flows: the model can chain multiple calls, maintain context between them, and react to partial results in real time.

In a multi-agent system, this translates into agents that cooperate over the same set of tools, sharing context when necessary but maintaining clear boundaries between responsibilities.
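
To make the idea of a stateful, multi-step flow concrete, here is a minimal sketch. It does not use any MCP SDK: `ToySession`, `find_customer`, and `open_ticket` are hypothetical names invented for illustration, and the "server" is just an in-process class. It only shows one session chaining two calls, where the second depends on the partial result of the first.

```python
from dataclasses import dataclass, field


@dataclass
class ToySession:
    """Hypothetical stand-in for one client-server session (not the MCP SDK)."""

    history: list = field(default_factory=list)  # results of earlier calls

    def call(self, tool, **arguments):
        # Toy tools; a real server would reach into your systems here.
        if tool == "find_customer":
            result = {"customer_id": 42, "email": arguments["email"]}
        elif tool == "open_ticket":
            result = {"ticket_id": 1001, "customer_id": arguments["customer_id"]}
        else:
            raise ValueError(f"unknown tool: {tool}")
        self.history.append((tool, result))  # context kept between calls
        return result


session = ToySession()
customer = session.call("find_customer", email="ana@example.com")
# The second call reuses the partial result of the first one.
ticket = session.call("open_ticket", customer_id=customer["customer_id"])
```

Because the session object outlives each call, the agent can inspect `session.history` at any step and decide what to do next.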

Standardized connection

At a technical level, MCP uses JSON-RPC 2.0 as the message format for tool calls, parameters, and responses.

Any MCP server that follows the specification can connect with any compatible MCP client, without your team having to reinvent the interface each time.
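
As an illustration of what travels over the wire, the sketch below builds JSON-RPC 2.0 messages with Python's standard library. The method names `tools/list` and `tools/call` come from the MCP specification; the tool name `get_invoice`, its arguments, and the response text are hypothetical, and the helper functions are ours, not part of any SDK.

```python
import json
from itertools import count

_ids = count(1)  # each request in a session needs its own id


def make_request(method, params=None):
    """Build a JSON-RPC 2.0 request object."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


def make_result(request, result):
    """Build the matching JSON-RPC 2.0 response (same id as the request)."""
    return {"jsonrpc": "2.0", "id": request["id"], "result": result}


# Ask the server which tools it offers, then call one of them.
list_request = make_request("tools/list")
call_request = make_request(
    "tools/call",
    {"name": "get_invoice", "arguments": {"invoice_id": "INV-001"}},  # hypothetical tool
)
call_response = make_result(
    call_request,
    {"content": [{"type": "text", "text": "Invoice INV-001: paid"}]},  # made-up payload
)

wire = json.dumps(call_request)  # the serialized form sent over the transport
```

The response carries the same `id` as the request, which is how a client matches answers to calls inside a long-lived session.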

For your architecture, this is equivalent to moving from point-to-point integrations to a logical capability bus: agents do not “talk” to each API; they speak MCP, and the servers translate that language into concrete actions on your systems.

The result is a drastic reduction in the complexity of onboarding new tools and in the maintenance cost of existing ones.

Direct and secure access to external tools

The Model Context Protocol enables the LLM to access corporate data and execute business tools without exposing credentials or unnecessarily expanding the attack surface. We are talking about:

- Querying databases.

- Manipulating files.

- Invoking internal services.

The MCP server acts as a guardian: it validates which resources are available, and under which permissions and controls, before executing any action.

Each request is accompanied by context (what the model wants to do and why), and the MCP server decides whether it is allowed, requires human review, or is blocked.

It is a pattern that enables robust flows, such as agents modifying configurations or writing into critical systems, while maintaining a clear audit trail and centralized security policies for your assets.
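
The allow, review, or block decision described above can be sketched as a small policy check that runs before any tool executes. This is server-side application logic you would write yourself, not something the protocol provides; the `POLICY` table, the role names, and the tool names are all hypothetical.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REVIEW = "review"  # pause until a human approves
    BLOCK = "block"


# Hypothetical policy table: tool name -> (roles allowed, needs human review)
POLICY = {
    "query_database": ({"analyst", "support"}, False),
    "update_config": ({"ops"}, True),
}


def decide(role, tool):
    """Return the decision for a request before anything is executed."""
    rule = POLICY.get(tool)
    if rule is None:
        return Decision.BLOCK  # unknown tools are denied by default
    allowed_roles, needs_review = rule
    if role not in allowed_roles:
        return Decision.BLOCK
    return Decision.REVIEW if needs_review else Decision.ALLOW
```

Denying anything that is not explicitly listed keeps the default safe: a newly added tool stays unreachable until someone writes a rule for it.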

How does MCP immediately impact your operations?

According to data from Pulse, the total number of MCP servers grew from about 100 in November 2024 to around 4,000 by May 2025. This roughly fortyfold growth in about six months is a clear signal of the qualitative leap this protocol represents.

Different sectors are incorporating the Model Context Protocol through companies that invest in truly intelligent automations and frictionless integrations in real workflows. Here is how MCP translates into ROI.

Greater scalability and modularity in processes

Without MCP, each new agent or integration increases coupling and brings you closer to the “intelligent monolith”: an AI solution that is difficult to scale, test, and version.

With MCP, you can design your agent ecosystem more like a microservices architecture: small, well-defined capabilities that are easily combinable through a common protocol.

This allows you to selectively scale the components that actually handle more load without touching the rest. It also enables different teams to work on different MCP servers, maintaining autonomy while respecting the same communication contract.

Lower complexity in integrations

Each API integration introduces more points of failure: version changes, specific data formats, inconsistent error handling, among others.

MCP reduces this complexity by providing an abstraction layer where models see a standard catalog of tools, with signatures and descriptions that follow the same pattern.

It is no longer necessary to adapt each agent’s behavior to the idiosyncrasies of each API, since your agents work with MCP descriptions, and the servers encapsulate the specific logic.
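
A minimal sketch of that abstraction layer: two backends with different calling conventions are wrapped behind catalog entries that all share the same shape. The `inputSchema` field name mirrors the one MCP uses for tool descriptors; everything else (`legacy_crm_lookup`, `billing_api`, the tool names, the returned data) is hypothetical and stands in for your real systems.

```python
# Two backends with different conventions (both hypothetical).
def legacy_crm_lookup(cust_id):  # positional id in, tuple out
    return ("Ana", "ana@example.com")


def billing_api(payload):  # dict in, dict out, camelCase keys
    return {"balance_cents": 1250, "customer": payload["customerId"]}


# Uniform catalog: every entry has the same shape, whatever sits behind it.
TOOLS = {
    "get_customer": {
        "description": "Look up a customer's name and email by id.",
        "inputSchema": {
            "type": "object",
            "properties": {"customer_id": {"type": "integer"}},
            "required": ["customer_id"],
        },
        "handler": lambda args: dict(
            zip(("name", "email"), legacy_crm_lookup(args["customer_id"]))
        ),
    },
    "get_balance": {
        "description": "Return a customer's balance in cents.",
        "inputSchema": {
            "type": "object",
            "properties": {"customer_id": {"type": "integer"}},
            "required": ["customer_id"],
        },
        "handler": lambda args: billing_api({"customerId": args["customer_id"]}),
    },
}


def call_tool(name, arguments):
    """Single entry point: check required arguments, then delegate."""
    tool = TOOLS[name]
    missing = [k for k in tool["inputSchema"]["required"] if k not in arguments]
    if missing:
        raise ValueError(f"missing arguments: {missing}")
    return tool["handler"](arguments)
```

The agent only ever calls `call_tool` with a name and a dictionary of arguments; the quirks of each backend stay inside the handlers.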

Consolidation of security and governance

The security of your assets tends to fragment when multiple agents interact with different APIs. Each integration has its own way of handling credentials, permissions, and logs, which makes governance and traceability more difficult.

The Model Context Protocol allows you to centralize that logic again in MCP servers, which act as a control layer where you define what each model can do, in what context, and under what conditions.

From a governance perspective, this means:

- Consistent policies for access to sensitive data and critical operations, without replicating rules across dozens of different integrations.

- Unified auditing of what each agent did, which tools it invoked, and with what parameters, facilitating regulatory compliance.

- A clear path to introduce human approvals in high-risk operations without breaking the overall automation flow.
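
Unified auditing can be as simple as routing every tool call through one wrapper that records who called what and with which parameters. The sketch below is an assumption about how you might do it, not a feature of the protocol: `AUDIT_LOG` is an in-memory list standing in for durable storage, and `refund` is a hypothetical tool.

```python
from datetime import datetime, timezone

AUDIT_LOG = []  # assumption: a real deployment would use durable, append-only storage


def audited_call(agent, tool, arguments, handler):
    """Run a tool handler and record the agent, tool, parameters, and outcome."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent": agent,
        "tool": tool,
        "arguments": arguments,
    }
    try:
        result = handler(**arguments)
        entry["outcome"] = "ok"
        return result
    except Exception as exc:
        entry["outcome"] = f"error: {exc}"
        raise
    finally:
        AUDIT_LOG.append(entry)  # recorded whether the call succeeded or failed


def refund(order_id, amount):  # hypothetical tool
    if amount > 100:
        raise ValueError("amount exceeds limit")
    return {"order_id": order_id, "refunded": amount}


audited_call("support-agent", "refund", {"order_id": "A-1", "amount": 20}, refund)
```

Writing the entry in the `finally` branch means failed calls leave a trace too, which is usually what a compliance review asks for first.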

Accelerated experimentation

Once your stack incorporates MCP, experimenting no longer means a large integration project every time you want to test a provider or a new type of agent.

With this approach, you can register new tools in an MCP server, expose them to the catalog that models see, and start testing flows with real impact in a matter of days, not months.
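
To show how light the registration step can be, here is a sketch using a plain Python decorator. `REGISTRY`, `tool`, `catalog`, and `convert_currency` are hypothetical names, and this is not the API of any MCP SDK; real SDKs offer their own registration mechanisms.

```python
REGISTRY = {}


def tool(func):
    """Register a function under its own name; its docstring is the description."""
    REGISTRY[func.__name__] = {
        "description": (func.__doc__ or "").strip(),
        "handler": func,
    }
    return func


@tool
def convert_currency(amount, rate):
    """Convert an amount using a fixed exchange rate."""
    return round(amount * rate, 2)


def catalog():
    """What a model would see: names and descriptions, not implementations."""
    return [{"name": n, "description": t["description"]} for n, t in REGISTRY.items()]
```

Adding an experimental tool is then a matter of writing one decorated function; removing it is deleting that function, with no change to the agents that read the catalog.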

This enables a continuous R&D dynamic: you iterate on prompts, agent roles, and tool compositions within the same protocol and observability framework.

For your business, the result is a renewable capacity to test hypotheses at a very low marginal cost without compromising the stability of the existing core.